In the last few months I have come across discussions about email security on Lemmy more than once. I have seen similar discussions on Reddit in the past, and in most cases the chain of events was somewhat like this:

- Proton, Tuta or some other email provider publishes a blog post that gets shared.

- In the comment section of these posts, there are usually people claiming that these services are just honeypots for whistle-blowers and the like, that storing keys in the cloud is no-bueno, that you should do everything yourself, that providers can hand stuff over to law enforcement, that nothing is needed because TLS is already used, and more.

- Many people argue, with arguments of varying quality, but without a shared understanding of what the goal is.

- Many people seem to think of security as something that is either fully achieved or completely missed.

What I want to do with this post is to hopefully help bring some order to the messy discussions that usually happen around this topic. To do this, I will build a threat model, discuss the risks of the different approaches and then let everyone make the decision that fits their needs and risk appetite.

My hope is that this post is something that people can refer to in the future when discussing this topic to facilitate mutual understanding and rational discussions.

Let's start with the bare minimum of background information.

Introduction

Email is a very old service, and like most old services, it was designed at a time when the Internet was basically a small club. In practice this means that not much thought went into building security into it, and a lot of the security had to be bolted on later, not always successfully.

One of the most interesting features of email is that it is based on a distributed protocol (which is why it is often used as an example to explain ActivityPub and the Fediverse to people who are rightfully confused): there are many email servers in the world, or at least there used to be, and they can all talk to each other via a standard language (mostly the Simple Mail Transfer Protocol) and deliver messages to each other's inboxes. However, all these servers handle messages in plaintext, which means your email can be read at least by the email providers of the sender and of the recipient(s). This is honestly both a legacy of a different time and a feature much loved by Google and the handful of other providers that can offer free mailboxes in exchange for reading your emails to then sell your data or profile you (or both).

Given that emails are just a simple way to move text, securing them only means a few things:

- Messages are delivered to the intended recipient(s) only.

- Nobody else besides the recipient(s) (and the sender) should be able to access the content of the message.

- The message should be delivered exactly how it was sent.

- Ideally, it should be possible to verify that the email was sent by whoever claims to be the sender.

Given how the protocol works, you can see that nothing in the list above addresses metadata. The servers will, in any case, have to know who the recipient of the email is, and will be able to log the time it was sent, etc. Protecting metadata is a non-goal of securing emails, because it is fundamentally at odds with the way email protocols work. For a discussion focused on privacy, you can have a look at this article.

To provide the above security, a standard was created to encrypt and decrypt emails using something called Public-Key Cryptography.

The Basics of Public-Key Cryptography

In case you are not familiar with public-key cryptography, you can visit the linked Wikipedia page, but I will nevertheless try to provide the simplest explanation that hopefully allows you to understand the rest of this post.

In public-key cryptography a "key" is actually a set of 2 keys. There are different mathematical ways to generate them, which are definitely out of scope here, but the end result is that they work "together": one key can decrypt the data encrypted with the other key. This is quite different from our usual way to think about cryptography, where both encryption and decryption operations are performed using the same key.

Why did they come up with this way to encrypt stuff? Well, it turns out that having 2 keys working together is quite convenient: you can call one key the "Private key", meaning it is personal and needs to be kept secret, while you can call the other the "Public key". Not only can you share the public key, but the more public it is (i.e., the more people know that it is your key), the more secure this whole mechanism is. Assume that you now want to send an encrypted message to Joe (sorry, Alice and Bob are busy): all you need to do is encrypt the message using Joe's public key. The resulting message can be decrypted with Joe's private key, and only with that, so Joe is the only one able to read it.
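To make the key-pair idea concrete, here is a minimal sketch of "textbook" RSA (one of the public-key algorithms OpenPGP supports) with deliberately tiny numbers; the primes 61 and 53 are the usual classroom example, and the function names are hypothetical. It is insecure by construction: real keys are thousands of bits long and always use a padding scheme. It only illustrates one key undoing what the other did. It assumes Python 3.8+ for `pow(e, -1, phi)`.

```python
# Toy "textbook RSA" with tiny primes: illustrates the key-pair idea only.
# NOT secure: real keys are 2048+ bits and use padding (e.g., OAEP).

def make_toy_keypair(p: int = 61, q: int = 53, e: int = 17):
    """Return (public_key, private_key) as (exponent, modulus) pairs."""
    n = p * q                  # modulus shared by both keys
    phi = (p - 1) * (q - 1)    # Euler's totient of n
    d = pow(e, -1, phi)        # private exponent: modular inverse of e
    return (e, n), (d, n)


def apply_key(key, m: int) -> int:
    """Raise m to the key's exponent modulo n (same operation for both keys)."""
    exponent, n = key
    return pow(m, exponent, n)


public_key, private_key = make_toy_keypair()

message = 65                                     # toy "message": an integer smaller than n
ciphertext = apply_key(public_key, message)      # anyone can encrypt with Joe's public key
recovered = apply_key(private_key, ciphertext)   # only Joe's private key undoes it
print(ciphertext, recovered)                     # prints: 2790 65
```

Applying the private key first and the public key second works just as well, which is the property digital signatures rely on.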

The above mechanism is the basis for public-key encryption, and note that a lot of the security relies on you actually being able to get Joe's public key from a trusted source! However, when Joe receives the message, he has no way to know that the message arrived without alteration or that it was actually you who sent it. Once again public-key cryptography can help here, with a bit of help from some other mathematical functions (hash functions). The gist of it is that these functions take an input and spit out a "hash", which is a string of fixed length (depending on the algorithm used) that in some way "identifies" the input (same input = same value, different input = different value). All you need to do then is to encrypt the value calculated on the message using your private key, and send it alongside the message. Once Joe receives this piece (which is called a Digital Signature), he can decrypt it using your public key, which is hopefully available to him, and he can independently compute the same "hash" using the same algorithm. If the value he gets matches the one obtained by decrypting your signature, it means that the message was not tampered with. Additionally, the fact that your public key was used to decrypt the signature also proves that your private key was used to produce it, which means Joe now knows that it was you who sent the message.
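The signing steps above can be sketched with Python's standard library, under loud assumptions: the key is textbook RSA built from two well-known Mersenne primes (2**127 - 1 and 2**521 - 1), which is completely insecure since the primes are public, and is chosen only so that the modulus exceeds 2**256 and a whole SHA-256 digest fits below it. Real RSA signatures also add a padding scheme; the function names here are hypothetical.

```python
import hashlib

# Toy RSA key from two public Mersenne primes: insecure, illustration only.
P = 2**127 - 1
Q = 2**521 - 1
N = P * Q                          # modulus, larger than any SHA-256 value
E = 65537                          # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent (Python 3.8+)


def digest_as_int(message: bytes) -> int:
    """Fixed-length 'fingerprint' of the message, as an integer."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big")


def sign(message: bytes) -> int:
    """'Encrypt' the hash with the private exponent D."""
    return pow(digest_as_int(message), D, N)


def verify(message: bytes, signature: int) -> bool:
    """Undo the signature with the public exponent E and compare hashes."""
    return pow(signature, E, N) == digest_as_int(message)


msg = b"Hi Joe, see you at 6pm."
sig = sign(msg)
print(verify(msg, sig))                         # True: unchanged and signed with D
print(verify(b"Hi Joe, see you at 9pm.", sig))  # False: content was altered
```

Verification only needs `E` and `N`, i.e., the public key, which is why Joe can check the signature without any secret.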

PGP Saving the World

The email security standard that relies on public-key cryptography is called PGP (naming here is messy: PGP was a program, OpenPGP is a standard, GPG is another program, whatever). Based on what we just discussed, it seems that PGP provides exactly all the security we need for emails, so shouldn't we all be done and satisfied? Well, not really. Using PGP introduces a number of problems. For example, if every message is encrypted when it reaches our inbox, it means that to read it we need to use our private key. If we want to read messages on multiple devices (say, our phone and our computer), then we need to have this key available on all of them. Additionally, all our email clients need to be able to understand PGP and perform decryption/encryption for us. Also, how much do we like searching emails for content? Well, if the messages are only decrypted by the clients, then these are the only places where we can actually search. Finally, how do we get people's public keys?

All these problems, combined with the fact that most people have mailboxes with one of those handful of providers, which they access through the browser (webmail), mean that a very, very, very small fraction of email users actually use PGP. Google and other providers have no interest at all in first-class support for PGP, as this would mean they wouldn't be able to read our messages, which is the whole reason why they offer the service for free in the first place. On the other hand, the very low adoption of PGP also means that the tooling around it is... not really friendly. It is not rocket science, but realistically, people who are not very technical will struggle quite a lot to achieve a functional setup on multiple devices.

This is the situation today, and I want to highlight this, as it is useful to understand the landscape when building threat models.

First Steps for the Email Threat Model

I am not sure how many people are familiar with threat models, or rather, with the formal way to describe them. Most people are surely familiar with the concept, even if they are not aware it is called this way. For example, when we leave our house to go on holiday, we usually think about turning off the water to avoid accidental flooding. We may also make sure to lock the door using all the locks, seal the windows and blinds, store in a safe some valuable objects that are usually kept on our desk, and so on. The process around all these considerations is basically an informal threat model.

Threat modeling is an activity that is performed to understand what kind of threats exist for certain assets (i.e., things we care about), who might be there to enact those threats (threat actors) and what security measures (controls) we have to prevent them.

I won't provide a primer for threat modeling, because I'd rather use this whole post as a concrete example. However, many resources are available online, and many methodologies exist to perform threat modeling activities. In this post I won't be too formal, but I will still follow a process.

Step 0 - Painting the Wide Picture

Before we even start with the actual threat model, we need to build the general picture of what happens when we send an email (the process for receiving an email is a mirrored version). In this case, I believe a picture is worth a thousand words, so I will start with that and add some comments later.

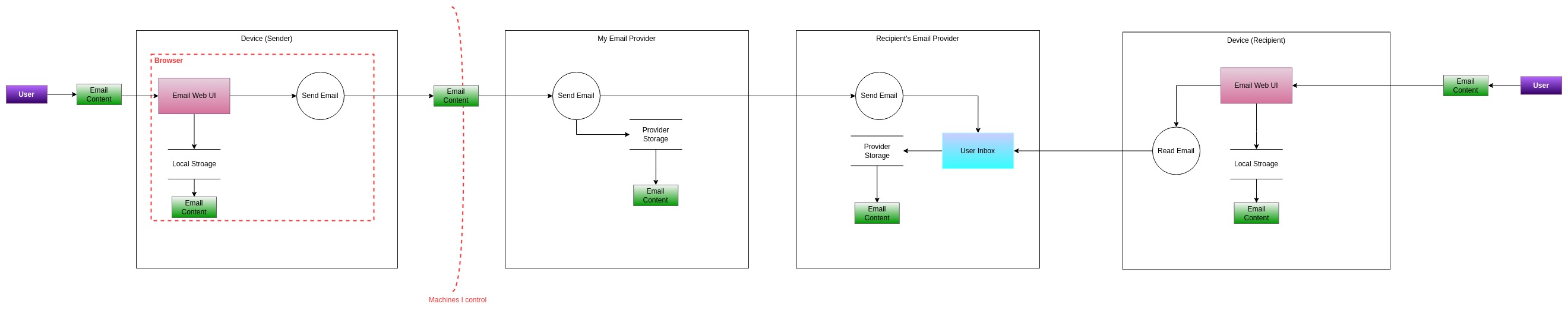

This is the most basic setup, without PGP or anything else (e.g., TLS). So what do we have in this picture?

- We have a very symmetric setup, with 4 main "boxes" representing (from left to right) our device, our email provider, the email provider for the recipient and the recipient's device.

- In the picture entities are represented with rectangular colored boxes, processes are represented with circles and data storages are represented with 2 horizontal parallel lines. Arrows indicate data flows, while red dashed lines indicate trust boundaries.

- The user on the left sends the email content (plaintext) through their email web UI (or any other client). This data flow crosses the trust boundary as now the content is available within the browser.

- The "send email" process is kicked off, and it requires the client to send the email content to the email provider. This data flow crosses another trust boundary, as at this point the email content is outside the devices we control.

- Our email provider receives the email and, depending on the recipient, has to decide where to send it. To make a generic case, we can assume that the sender and the recipient use different email providers; if this were not the case, the email would be delivered directly to the recipient's inbox.

- Our email provider sends the email to the recipient's email provider. This in turn sends the email to the user's inbox (still within the provider).

- Finally, our recipient accesses their inbox via their own client and the "read email" process.

Note that I didn't add every trust boundary. Technically, it would be possible to consider our recipient's device somewhat more trusted than the email providers, or our email provider more trusted than the recipient's one, but for the scope of this conversation this has little to no impact, so I decided to keep it as simple as possible. Likewise, the diagram is not meant to be an accurate technical representation (for example, a copy of the email we send also ends up in our own mailbox); it's just a tool to model the process at a very high level.

Step 1 - Identify the Assets

We will start our journey with the simplest activity (in this case!): understanding what we are trying to protect. In the case of emails, the answer is pretty simple: the content of our emails. Pay attention to the word content, because it makes a huge difference. If you consider it valuable to protect the entire email (including metadata such as recipient, sender, date, etc.), then you can skip this whole process, as the conclusion is very simple: there are no controls that can protect metadata for emails, so you should use other tools/protocols that can provide that (e.g., Signal, Matrix, etc.). The subject of the email is also generally not secured (i.e., encrypted), at least as part of the PGP standard.

Given the simplicity of the context, the asset list is very simple:

| Asset | Description |
|---|---|
| A01 | Email content sent (and received) |

Step 2 - Identify Threats and Threat Actors

Once we have a clear(er) idea of what we are trying to protect, and of the landscape we are operating in, we can start brainstorming about who is likely to attack us, and what "attacking us" actually means (i.e., what they could do).

Threat actors usually fall into relatively well-known categories, so we don't need to make up the list; however, different actors might apply more or less to your scenario!

| Threat Actor | Name | Description |
|---|---|---|
| TA01 | Career Cybercriminals | Common cybercriminals who aim to steal data or information for financial gain. They usually bet on quantity over quality, with moderate funding and technical skills |
| TA02 | Hacktivists | People motivated by social and political reasons. Generally low funding but potentially high motivation. Technical sophistication can vary greatly |
| TA03 | State-sponsored actors | Highly funded groups that act on behalf of a nation-state. High technical capabilities and resources. They generally act outside the legal framework |
| TA04 | Law Enforcement | Decently funded and technically sophisticated. They can use legal processes to coerce entities into helping with investigations |
| TA05 | Family Members | People with very different levels of motivation and technical skills, but deep knowledge of you and your habits, and physical access to your device(s) |

These are just some examples, but hopefully the idea is clear. If you are a journalist trying to protect your sources, an insider aiming to leak documents, or someone trying to protect your intellectual property from competitors, you might want to take into consideration other threat actors that are specific to your use case.

For most people reading this post, I am going to assume that the main actors they should worry about are career cybercriminals, nosy (or abusive) family members and, in some cases, law enforcement (although if you are doing something illegal, hopefully you didn't have to read this in a post on this blog).

Now let's think about what these actors can do; we will add these threats to the diagram later. To do this, I will use the STRIDE acronym as a prompt, which gives its name to a whole methodology for threat modeling. The acronym stands for Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service and Elevation of privilege. Note that the threats below don't take into account any control (like TLS).

| Threat | Type | Likely Threat Actors | Description |
|---|---|---|---|
| T01 | S | TA01, TA03, TA04 | An attacker could spoof my email server and trick my client into sending it the email (leading to information disclosure) |
| T02 | S | TA01, TA02, TA03, TA04, TA05 | An attacker could impersonate the intended recipient and trick me into sending them an email meant for another person (leading to information disclosure) |
| T03 | T | TA03, TA04 | The message sent could be modified by the email servers |
| T04 | T | TA03, TA04 | The message sent could be modified by an attacker who is able to intercept traffic between me and my mail server, between mail servers, or between my recipient and their email server |
| T05 | R | TA05 | The recipient could deny having received my email |
| T06 | I | TA01, TA02, TA03, TA04, TA05 | An attacker with physical access to my device(s) could access the content of the email |
| T07 | I | TA02, TA03, TA04 | An attacker who can access the mail servers could read the content of the email |
| T08 | I | TA02, TA05 | The recipient of the email could disclose the content to unintended parties |
| T09 | I | TA01, TA03, TA04 | An attacker who compromised my device could access the content of the email (e.g., keylogger, access to data) |
| T10 | I | TA03, TA04 | An attacker who compromised my client software (e.g., remote vulnerability, malicious update) could access the content of the email |
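For readers who like to keep such lists in code, here is one possible way to encode the table as plain data, so that questions like "what could a family member realistically do?" become one-liners. This is a minimal sketch: the `Threat` class and the `threats_from` helper are hypothetical, and only the IDs, STRIDE letters and actor assignments come from the table (Python 3.9+ is assumed for the `frozenset[str]` annotation).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threat:
    tid: str                 # threat ID from the table, e.g. "T01"
    stride: str              # one STRIDE letter: S, T, R, I, D or E
    actors: frozenset[str]   # likely threat actors, e.g. {"TA01", "TA03"}


THREATS = [
    Threat("T01", "S", frozenset({"TA01", "TA03", "TA04"})),
    Threat("T02", "S", frozenset({"TA01", "TA02", "TA03", "TA04", "TA05"})),
    Threat("T03", "T", frozenset({"TA03", "TA04"})),
    Threat("T04", "T", frozenset({"TA03", "TA04"})),
    Threat("T05", "R", frozenset({"TA05"})),
    Threat("T06", "I", frozenset({"TA01", "TA02", "TA03", "TA04", "TA05"})),
    Threat("T07", "I", frozenset({"TA02", "TA03", "TA04"})),
    Threat("T08", "I", frozenset({"TA02", "TA05"})),
    Threat("T09", "I", frozenset({"TA01", "TA03", "TA04"})),
    Threat("T10", "I", frozenset({"TA03", "TA04"})),
]


def threats_from(actor: str) -> list[str]:
    """IDs of the threats the given actor is considered likely to carry out."""
    return [t.tid for t in THREATS if actor in t.actors]


print(threats_from("TA05"))  # ['T02', 'T05', 'T06', 'T08']
```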

I will ignore Denial of Service and Elevation of privilege threats for the moment, because they are not really relevant in the context of this discussion. Note also that almost every threat related to Information Disclosure is also a Tampering threat, and vice versa. Categorizing threats is not very important; this is just a mnemonic tool to help us cover all necessary aspects, nothing more.

Step 3 - Adding Controls

The setup discussed so far is extremely basic: it doesn't even contain the bare minimum of security that any mail provider implements nowadays. In particular, the main control missing from the design so far is the use of TLS (Transport Layer Security) between clients and servers and between servers.

TLS is just a way to establish a point-to-point encrypted channel between two parties (it also relies on public-key cryptography). I want to take a moment here to clarify one particular point which I have seen misunderstood many times in discussions around this topic.

Some people read about TLS and somehow confuse it with End-to-End Encryption (e2ee). I have seen some discussions in which people essentially said something like "e2ee email is a marketing gimmick, email is already end-to-end encrypted since it uses TLS".

I think the confusion is legitimate, because for regular web browsing TLS can be considered an "end-to-end" encryption layer: after all, the conversation is only between two parties, the client (our browser) and the server (in reality this is rarely the case). In the case of email, however, there are multiple communications and channels involved. Each of these communications is encrypted, but that means that any party involved in any of them has access to the data of the email. If we consider the usual meaning of "end" in end-to-end encryption, then the "ends" are the sender and the recipient. TLS sessions, however, are established between the sender's client and their mail server. The mail server terminates the TLS session, decrypts the content, and establishes another session with the recipient's mail server, which in turn establishes yet another one with the recipient when they access their email. Technically, it's not even possible to make sure that the recipient uses TLS when accessing their emails (although it's a fair assumption)!
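A toy model of the hop-by-hop picture just described may help. It uses hypothetical party names and no real networking, assumes TLS itself is unbroken, and only asks one question: who ends up holding the readable content of the email?

```python
# Simplified path of one email: each hop is its own, separate TLS session.
HOPS = [
    ("sender's client", "sender's mail server"),
    ("sender's mail server", "recipient's mail server"),
    ("recipient's mail server", "recipient's client"),
]


def plaintext_visible_to(end_to_end: bool) -> set[str]:
    """Parties that can read the email content (attacks on TLS are ignored)."""
    if end_to_end:
        # Content is encrypted by the sender's client and only decrypted by the
        # recipient's client; the servers only ever relay ciphertext.
        return {HOPS[0][0], HOPS[-1][1]}
    visible = set()
    for sender, receiver in HOPS:
        # TLS hides the content from the network, but both endpoints of each
        # session see it decrypted.
        visible.update((sender, receiver))
    return visible


print(sorted(plaintext_visible_to(end_to_end=False)))  # all four parties
print(sorted(plaintext_visible_to(end_to_end=True)))   # only the two clients
```

TLS on every hop changes who can read the traffic on the wire, not how many parties see the content; only encrypting before the first hop reduces that set to the two "ends".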

It should hopefully be easy to understand that TLS here is a very important security control, but it is only useful to ensure that nobody can intercept the network traffic and access the email content, and to guarantee to the sender/recipient that they are interacting with their mail server.

In threat modeling terms, TLS as a control mitigates only threats T01 and T04, by ensuring confidentiality and integrity for the messages in transit.

For ease of reference, this is the control list so far:

| Control | Description | Mitigates |
|---|---|---|
| C01 | TLS is used by clients to access mail servers | T01, T04 |
| C02 | Email servers communicate with each other using TLS | T04 |
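A quick way to see how much is still uncovered is to subtract the threats addressed by the controls from the full threat list. The sketch below only uses the IDs from the tables; the `unmitigated` helper is hypothetical, and "mitigated" here simply means "at least one control is listed against it", not "fully solved".

```python
# Threat IDs T01 ... T10 from the STRIDE table, and the controls listed so far.
THREATS = [f"T{i:02d}" for i in range(1, 11)]
CONTROLS = {
    "C01": {"T01", "T04"},  # clients use TLS to reach mail servers
    "C02": {"T04"},         # mail servers talk to each other over TLS
}


def unmitigated(threats, controls):
    """Threats that no listed control claims to address."""
    covered = set().union(*controls.values())
    return [t for t in threats if t not in covered]


print(unmitigated(THREATS, CONTROLS))
# ['T02', 'T03', 'T05', 'T06', 'T07', 'T08', 'T09', 'T10']
```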

Edit (2024-08-25): A good point was raised by a Lemmy user: even in 2024, there are still some email servers that do not use TLS. None of the mainstream email providers for private users are among them, but considering that it's not possible to know whether the recipient's server uses TLS, there are cases where not even these basic controls apply.
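For the technically inclined, it is possible to check whether a given receiving server at least *offers* TLS, using Python's standard `smtplib`. This is a point-in-time sketch with caveats: a positive answer does not guarantee that the sending server will actually use STARTTLS for a given message (on server-to-server hops it is typically opportunistic), many ISPs block outbound port 25, and the MX host name has to be looked up separately (for example with `dig MX`). The host name below is hypothetical.

```python
import smtplib


def advertises_starttls(mx_host: str, timeout: float = 10.0) -> bool:
    """Connect to mx_host on port 25 and report whether EHLO lists STARTTLS.

    True only means the server offers TLS right now; it says nothing about
    whether the sending server will actually use it for a given message.
    """
    with smtplib.SMTP(mx_host, 25, timeout=timeout) as smtp:
        smtp.ehlo()
        return smtp.has_extn("starttls")


# Usage (hypothetical host; look up the real MX record first):
# print(advertises_starttls("mx.example.org"))
```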

Email Threat Model for Most People

Once we add TLS to the picture, we get a realistic threat model for most people today which use providers like Gmail, Outlook etc., and do not use PGP.

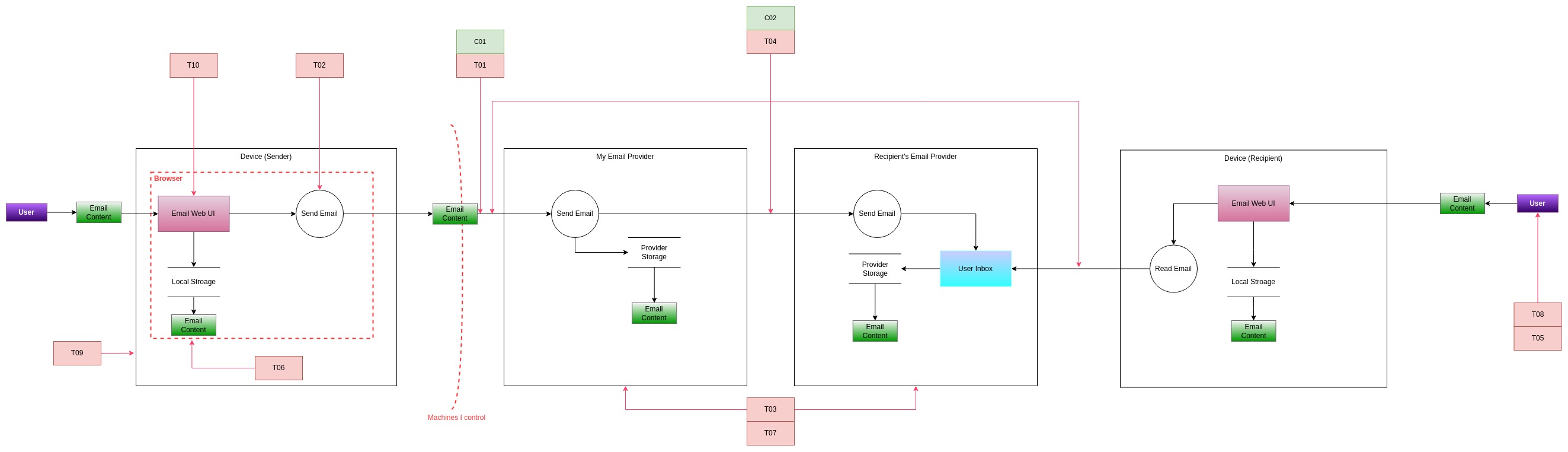

Let's update the diagram to reflect threats and controls listed so far.

The diagram above essentially shows that we still have plenty of threats that do not have corresponding controls. In fact, we are not done yet, and to dig further we will need to add even more elements. However, at this point another disclaimer is necessary: threat modeling can be an unbounded activity. The moment you mention a control (e.g., TLS), you could start evaluating all the risks associated with that control (e.g., what if a Certificate Authority is compromised? What if an attacker injects a malicious certificate into your device's CA list?). I want to keep the scope of this conversation focused on email and the processes strictly related to it.

That said, there are differences between implementations of the same control (e.g., PGP), and since these are generally a very hot topic of discussion, I want to capture at least the biggest differences, so that everyone can then make a decision based on what threat model is acceptable for them. Unfortunately, this will require expanding the threat list and a more technical discussion.

Email Threat Model for People using PGP

As I mentioned, the tooling around PGP is not really friendly for many people without certain technical skills. To address this, some companies have tried to solve the problem by integrating PGP directly into their email service, or by rolling out similar encryption mechanisms, without impacting the user experience. Among these companies, some of the ones I know are:

- Proton

- Tuta

- Mailbox.org

- Posteo

Even their implementations differ, but without going too much into detail, we can roughly identify two main approaches to PGP encryption:

- The first is the one where PGP is integrated transparently by the service provider. In this setup, the entity encrypting/decrypting the content is the same as the one delivering the emails. I will refer to this approach as "Transparent Encryption".

- The second is the one where PGP is integrated by some client-side tooling that the user chooses. In this setup the email provider doesn't perform any cryptographic operation. I will refer to this approach as "Custom Encryption"

Threat Model for Transparent Encryption

To model this architecture I will take as an example the design used by Protonmail, as it is the biggest "secure email provider", the one I use, and the one I have heard the most controversy about. Other providers might have slightly different implementations (e.g., Tuta does not use PGP), but I believe the impact on the threat model is minimal.

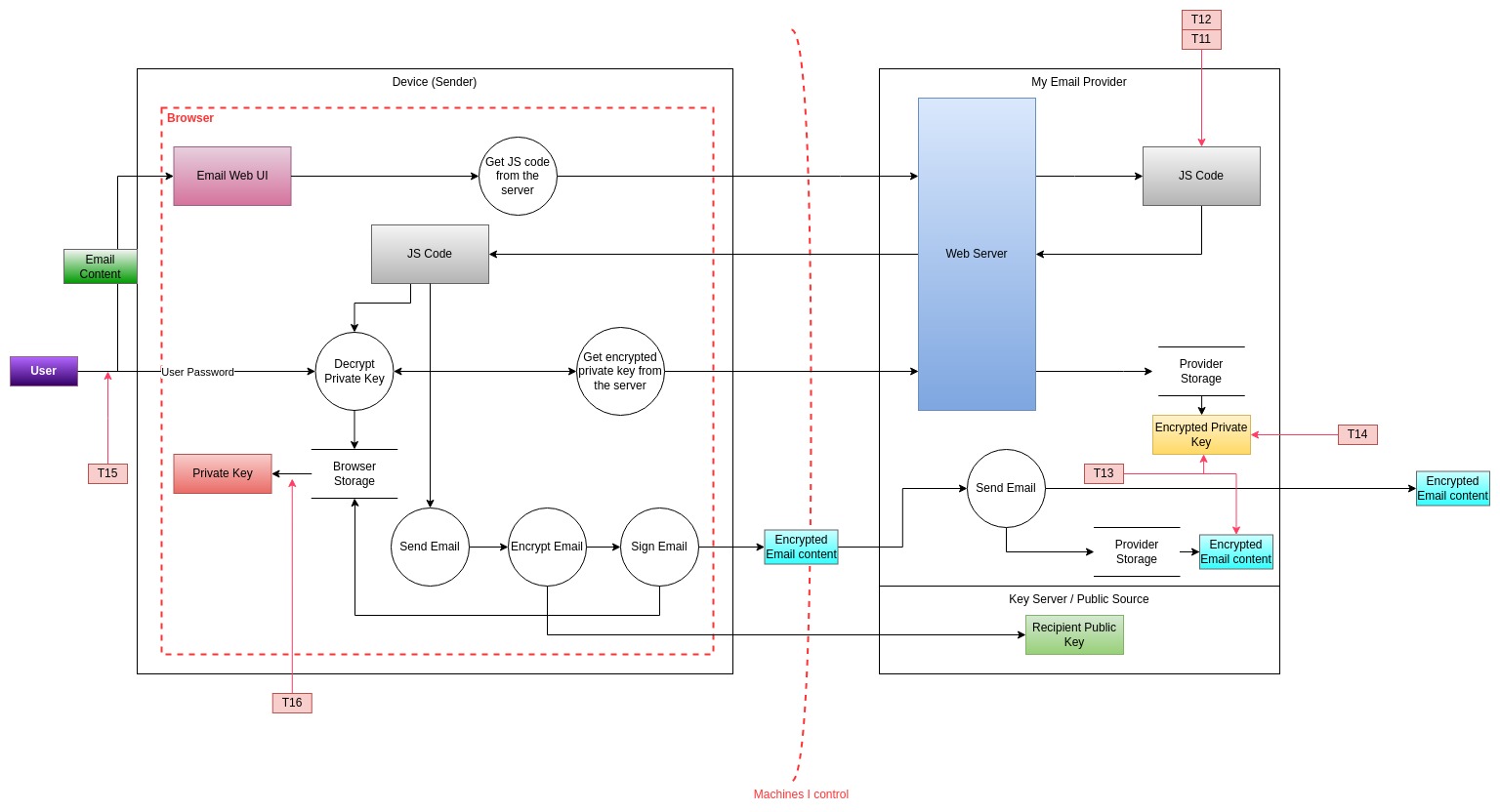

In this setup, the content on the provider's servers is stored fully encrypted. The provider does not have direct access to the (private) key; instead, it stores a version of the key encrypted with the user's password. The email client is in most cases web-based, which means the encryption/decryption operations are implemented in JavaScript code that runs in the user's browser (it's also possible to have desktop applications that perform the same operations). This code fetches the encrypted key from the servers and uses the user's password to decrypt it, and then the content, locally. This process allows for easy access from multiple devices, since the private key can be distributed by the server, even if the server does not have direct access to it (i.e., in unencrypted form).
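To make the "key encrypted with the user's password" idea concrete, here is a minimal sketch using only Python's standard library. It is NOT Protonmail's actual scheme: the key derivation (PBKDF2), the HMAC-based keystream, the blob layout and all function names are hypothetical choices for illustration, and real deployments use vetted ciphers such as AES. What it shows is the shape of the design: the provider stores a blob it cannot open, and only someone who knows the password can turn it back into the private key.

```python
import hashlib
import hmac
import secrets

# HYPOTHETICAL scheme, illustration only: PBKDF2 key derivation, a keystream
# from HMAC-SHA256 in counter mode, and an HMAC tag (encrypt-then-MAC).
ITERATIONS = 200_000  # illustrative work factor


def _derive_keys(password: str, salt: bytes) -> tuple[bytes, bytes]:
    """Stretch the password into an encryption key and a separate MAC key."""
    material = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS, dklen=64)
    return material[:32], material[32:]


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Pseudo-random bytes: HMAC(key, nonce || counter) for counter = 0, 1, ..."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hmac.new(key, nonce + counter.to_bytes(8, "big"), hashlib.sha256).digest()
        counter += 1
    return out[:length]


def wrap_private_key(private_key: bytes, password: str) -> dict:
    """Runs on the client: produces the blob that is uploaded to the provider."""
    salt, nonce = secrets.token_bytes(16), secrets.token_bytes(16)
    enc_key, mac_key = _derive_keys(password, salt)
    stream = _keystream(enc_key, nonce, len(private_key))
    ciphertext = bytes(a ^ b for a, b in zip(private_key, stream))
    tag = hmac.new(mac_key, nonce + ciphertext, hashlib.sha256).digest()
    return {"salt": salt, "nonce": nonce, "ciphertext": ciphertext, "tag": tag}


def unwrap_private_key(blob: dict, password: str) -> bytes:
    """Runs on the client after fetching the blob; fails on a wrong password."""
    enc_key, mac_key = _derive_keys(password, blob["salt"])
    expected = hmac.new(mac_key, blob["nonce"] + blob["ciphertext"], hashlib.sha256).digest()
    if not hmac.compare_digest(expected, blob["tag"]):
        raise ValueError("wrong password or tampered blob")
    stream = _keystream(enc_key, blob["nonce"], len(blob["ciphertext"]))
    return bytes(a ^ b for a, b in zip(blob["ciphertext"], stream))
```

In this sketch the provider only ever stores the dictionary returned by `wrap_private_key`; any device that fetches it and knows the password can call `unwrap_private_key`, which is what makes multi-device access easy. The flip side is that whoever serves the code that runs these functions is in a position to change them.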

The main point to highlight is that the encryption/decryption operations are performed by code written by the same entity which stores the encrypted data and sends the emails.

In this setup we can think of a few threats in addition to those already listed.

| Threat | Type | Likely Threat Actors | Description |
|---|---|---|---|
| T11 | T | TA03, TA04 | An attacker who compromises the email provider could publish a malicious update to the client software that would reveal the key or the data |
| T12 | T | TA04 | Law enforcement could coerce the email provider to deliver a modified version of the JavaScript client to a specific user or set of users, which would reveal the key or the data |
| T13 | I | TA04 | Law enforcement could coerce the email provider to provide them the user's data in encrypted form |
| T14 | I | TA03, TA04 | The encryption of the private key is done using weak ciphers and can be broken by an attacker who gets access to the ciphertext |
| T15 | I | TA01, TA03, TA04 | The encryption of the private key is done using a weak key (e.g., the user chooses a weak password) and can be broken by an attacker who gets access to the ciphertext |
| T16 | E | TA01, TA03, TA04 | A browser or host vulnerability could lead to malicious code accessing data belonging to the email client |
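T15 deserves a closer look, because it combines badly with T13: once someone holds a copy of the encrypted key, guessing happens offline, with no server to rate-limit or lock out attempts. The sketch below illustrates such an offline dictionary attack under stated assumptions: `password_check_value` is a hypothetical stand-in for "derive a key from the guess and see whether the blob opens", the salt and wordlist are made up, and the iteration count is kept low only so the demo runs quickly.

```python
import hashlib
import hmac
from typing import Optional

ITERATIONS = 10_000  # deliberately low so the demo runs quickly


def password_check_value(password: str, salt: bytes) -> bytes:
    """Hypothetical stand-in for 'does this guess open the stolen blob?'."""
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)


def offline_dictionary_attack(salt: bytes, target: bytes, wordlist: list[str]) -> Optional[str]:
    """Try every candidate locally: no server involved, so no rate limit or lockout."""
    for guess in wordlist:
        if hmac.compare_digest(password_check_value(guess, salt), target):
            return guess
    return None


# Hypothetical stolen data: the salt travels with the ciphertext.
salt = bytes.fromhex("00112233445566778899aabbccddeeff")
target = password_check_value("sunshine", salt)
print(offline_dictionary_attack(salt, target, ["123456", "password", "qwerty", "sunshine"]))
# sunshine
```

The only things slowing the attacker down are the cost of each guess (the key-derivation work factor) and the size of the space they must search, which is why password strength (C05) matters so much in this setup.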

Note that most of these threats are technically challenging, and most of them are generally not something an average cybercriminal (group) would be able to pull off.

Given that the only differences between this architecture and the one presented in the first threat model are on our machine and at our email provider, I will leave everything else out of the picture for now and focus only on these two areas. The resulting threat model is something like the following:

The picture is starting to get quite messy, but overall it represents the following:

- We have 3 main parties: My device (box on the left), My Email provider (box on the right) and the Key Server (which often is the same as the email provider). The latter is used to discover public keys for individuals to whom we want to send emails.

- In our device we are running the client inside the browser, which is represented as a trust boundary. This client (web UI) fetches the JS code from the provider to perform the crypto operations. The JS code performs all the necessary steps for sending an email:

  - First, it gets the (encrypted) private key from the provider and uses the user's password to decrypt it. With the decrypted key we can already access, for example, our whole inbox.

  - It then initiates the process for sending an email, including the encryption, which requires the recipient's public key, and the signing, which uses our own private key.

  - The email content sent to our provider is therefore encrypted, and it travels in that form towards the recipient's email server (not shown in the picture).

- The threats T11-T16 are then shown in the picture, linked to the corresponding processes or data flows.

- You can hopefully adapt the above model easily enough to similar setups in which the email provider uses desktop applications or a browser plugin to do the crypto integration.
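The client-side sending steps can be sketched with toy "textbook RSA" keys (tiny primes, no padding, insecure; all names are hypothetical). Two simplifications are worth stating loudly: the step that unwraps our private key with the password is omitted, and real OpenPGP signs a hash and encrypts the message with a random session key, encrypting only that session key with the recipient's public key. What the sketch keeps is the order of operations and where each key lives.

```python
# Two toy textbook-RSA key pairs: insecure, illustration only.
def keypair(p: int, q: int, e: int = 17):
    n = p * q
    d = pow(e, -1, (p - 1) * (q - 1))  # Python 3.8+
    return (e, n), (d, n)


def apply_key(key, m: int) -> int:
    exponent, n = key
    return pow(m, exponent, n)


my_public, my_private = keypair(61, 53)    # n = 3233
joe_public, joe_private = keypair(67, 71)  # n = 4757, large enough to carry my signature


def client_send(message: int):
    """Runs on my device: sign with my private key, then encrypt both for Joe."""
    signature = apply_key(my_private, message)
    return apply_key(joe_public, message), apply_key(joe_public, signature)


def joe_read(encrypted_message: int, encrypted_signature: int):
    """Runs on Joe's device: decrypt with Joe's private key, verify with my public key."""
    message = apply_key(joe_private, encrypted_message)
    signature = apply_key(joe_private, encrypted_signature)
    return message, apply_key(my_public, signature) == message


wire = client_send(65)   # only these two integers reach the providers
print(joe_read(*wire))   # (65, True)
```

Neither private key ever appears in `wire`, which is the point of doing the crypto in the client; the threats T11 and T12 are precisely about the code of `client_send` being served by the provider.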

So far we have focused purely on technical controls (TLS, public-key encryption), but to have a comprehensive discussion we also need to consider other types of controls, such as administrative ones.

In particular some controls that come to mind are:

| Control | Description | Mitigates |
|---|---|---|
| C03 | The email provider implements robust security measures which are certified through some recognized standard | T11 |
| C04 | Ciphers and algorithms used are documented and adhere to the best current practices | T14 |
| C05 | Password complexity requirements are implemented during registration | T15 |

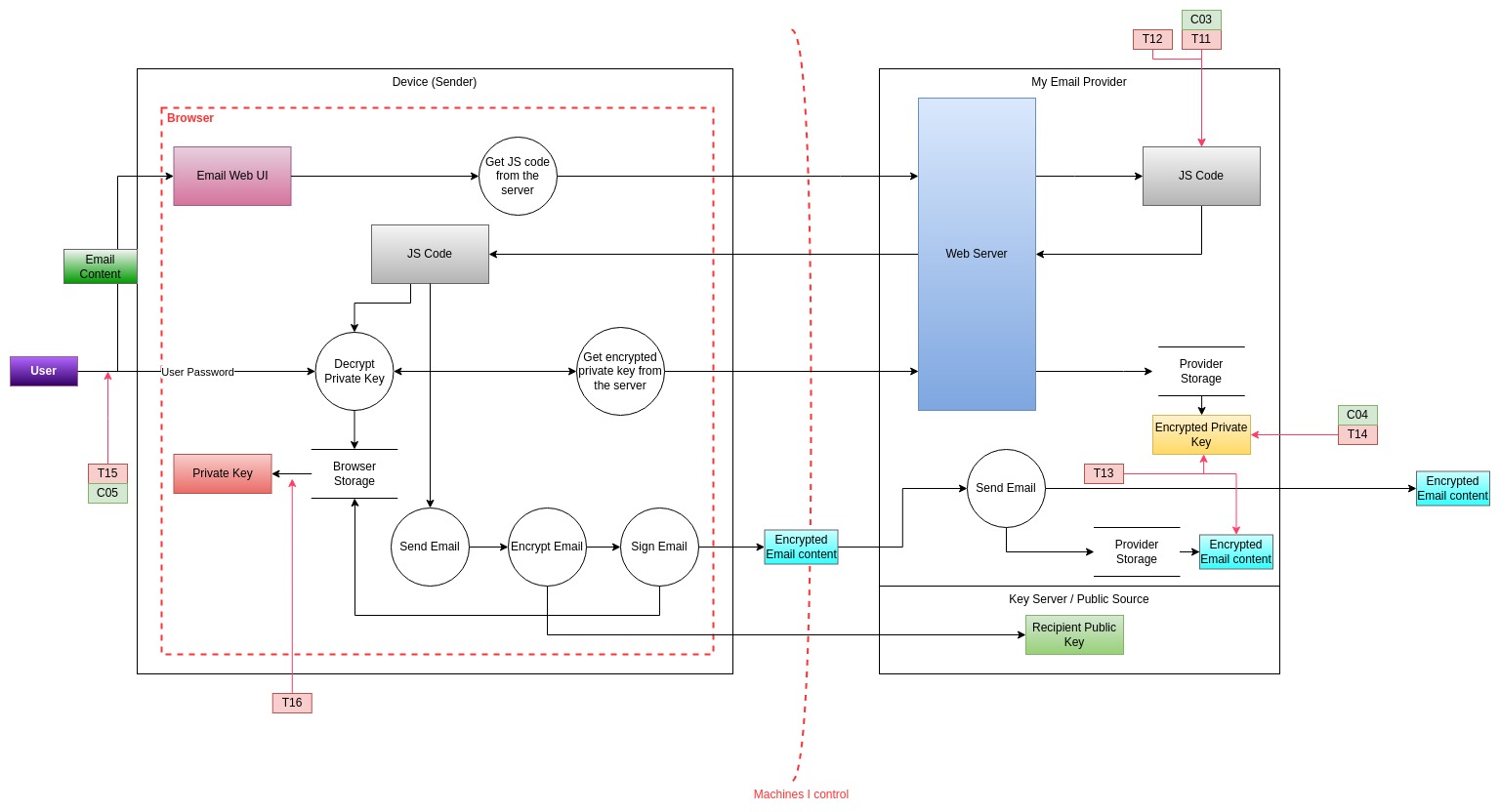

With these in mind, the resulting threat model is the following:

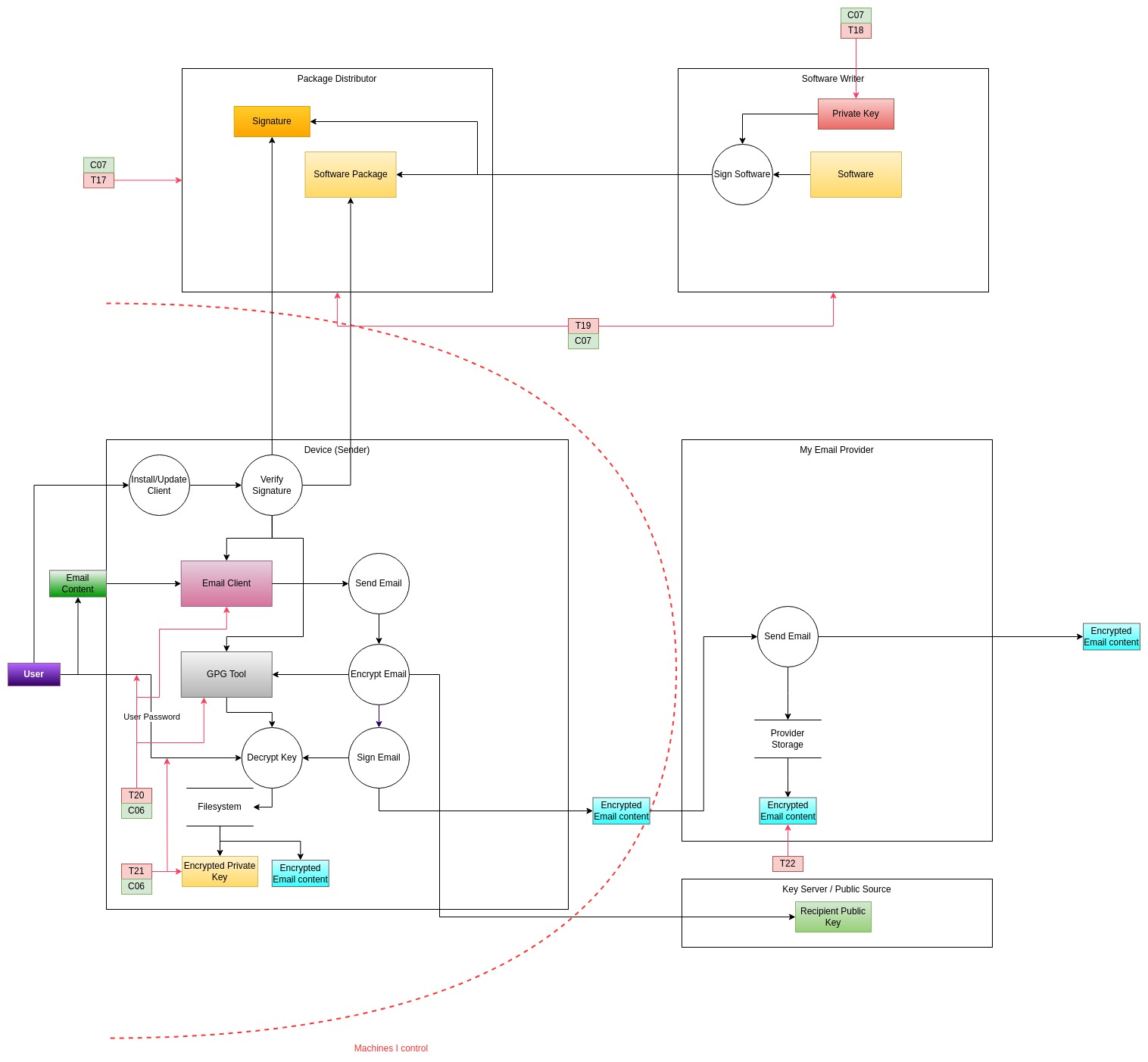

Threat Model for Custom Encryption

This setup has, by definition, a huge number of variants. There are many tools that can interact with servers via IMAP/POP3 and support PGP. It's also possible to roll your own (although not recommended), which means there are probably a lot of tools following different standards. To build a threat model I will make some assumptions that are hopefully generic enough and do not affect the outcome massively.

I am going to assume that the email provider simply offers IMAP/POP3 access, and that the client software is installed using a package manager. I also assume that the keys (e.g., PGP keys) are stored locally on the device and are protected with a password. Finally, I am going to assume good hygiene, i.e., that the email (e.g., IMAP) password is also stored encrypted.

Based on these assumptions, what are the differences from the "transparent" setup? Well, as you can imagine, the main difference is that in this case the email provider never, for any reason, has access either to the keys (not even encrypted) or to the email content (unencrypted). In this case it is the client software that has access to both the key and the email content. The reason why this may be a significant difference security-wise is that the client software is managed by a package manager, is downloaded without authenticating (i.e., identifying) yourself and is updated on demand by the user, not at every access (unlike the JavaScript code served by the email provider in the previous setup).

The process for signing/encrypting is fairly similar though: the user performs a login, the content of the message is typed in, the client tool prompts the user for the PGP password to sign the message, the private key is accessed and used to sign, the email is sent to the server which then sends it to destination.

Based on the above, we can identify the following threats:

| Threat | Type | Likely Threat Actors | Description |
|---|---|---|---|
| T17 | T | TA03, TA04 | An attacker who compromises the package manager (e.g., a mirror) can serve a backdoored version of the client software |
| T18 | T | TA02, TA03, TA04 | An attacker who compromises the software maintainer(s)' key or repository can publish a backdoored version of the client software |
| T19 | T | TA04 | Law enforcement can coerce a package maintainer or repository to publish a backdoored version of the software |
| T20 | I | TA01, TA03, TA04 | An attacker with host access can phish the user's PGP password by emulating the client software's behavior, revealing the private key or email password |
| T21 | I | TA01, TA03, TA04 | The user configures PGP with weak ciphers or a weak password, resulting in the key being accessed by an attacker who compromised the device |
| T22 | I | TA04 | Law enforcement can coerce the email provider to disclose the user's (encrypted) data |
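T17 is the kind of threat package managers are built to resist: most major Linux package managers check each download against checksums that come from signed repository metadata rather than from the mirror itself. The sketch below shows only the checksum half of that idea with Python's standard library; the function names are hypothetical, and the expected value is assumed to come from a channel you already trust. Note what it cannot do: if the maintainer's key or repository is compromised (T18), or the maintainer is coerced (T19), the published checksum matches the backdoored build and the check passes.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 65536) -> str:
    """Hex SHA-256 of a file, read in chunks so large packages fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_checksum(path: str, expected_hex: str) -> bool:
    """True if the downloaded file matches a checksum obtained from a trusted channel."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```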

Similarly to the transparent encryption setup, most of these threats require the compromise of an external party with the specific intent to compromise a user. Given the complexity and the resources required, these are not the types of attacks that regular cybercriminals would be able to perform.

Compared to the transparent encryption setup, where most of the setup is "outsourced" and therefore can't really be modified much, in this case there are controls against some of these threats that users can implement themselves. For example:

| Control | Description |
|---|---|
| C06 | PGP keys are stored only on a security key (e.g., a Yubikey) |
| C07 | The user's preferred tool is not immediately known to an external attacker (making it harder to target the distributor or the user specifically) |

It's important to note that C06 requires additional hardware and complicates the setup quite a lot. Additionally, it might be quite hard to have proper support on multiple devices. Also, C07 increases the complexity (e.g., the amount of reconnaissance needed) of attacking the user, but is by no means a technical control.

The resulting threat model looks like the following:

Summary

With so many pictures and tables, I am sure someone might be rightfully lost. In this section I want to summarize the information presented so far, with a particular focus on the threats that apply to different setups.

For the sake of this discussion, I have considered three setups that roughly represent the three main options people have today for their email:

- Basic setup: this is what realistically most people use, the vanilla experience from the main email providers. No PGP in use, no custom tooling, just the bare minimum (i.e., TLS).

- Transparent encryption setup: this setup is what people using secure email providers (e.g., Proton, Tuta) mostly get. PGP (or something very similar) is used transparently, and no custom tooling is needed.

- Custom encryption setup: this setup is an alternative to the above, where email and crypto operations are logically separated and performed by different tools. Custom tools are used in this scenario.

The following table summarizes which threats apply to each scenario (using IDs for brevity). The ❌ symbol means that a threat does not apply to the scenario, while ✅ means that the threat is valid for that scenario (thanks to those who pointed out that the use of symbols was ambiguous):

| Threat | Basic | Transparent Encryption | Custom Encryption |
|---|---|---|---|
| T01 | ❌ | ❌ | ❌ |
| T02 | ✅ | ❌ (as long as public key is from a trusted source) | ❌ (as long as public key is from a trusted source) |
| T03 | ✅ | ❌ | ❌ |
| T04 | ✅ | ❌ | ❌ |
| T05 | ✅ | ❌ | ❌ |
| T06 | ❌ (unless browser session is accessed) | ❌ (unless browser session is accessed) | ❌ (unless access after decryption) |
| T07 | ✅ | ❌ | ❌ |
| T08 | ✅ | ✅ | ✅ |
| T09 | ✅ | ✅ | ✅ |
| T10 | ✅ | ✅ | ✅ |
| T11 | N/A | ✅ (partial) | ❌ |
| T12 | N/A | ✅ | ❌ |
| T13 | N/A | ✅ | ✅ |
| T14 | N/A | ✅ (partial) | N/A |
| T15 | ✅ | ✅ (partial) | N/A |
| T16 | ✅ | ✅ | ❌ |
| T17 | ❌ | ❌ | ✅ |
| T18 | ❌ | ❌ | ✅ |
| T19 | ❌ | ❌ | ✅ |
| T20 | N/A | ❌ | ✅ (partial) |
| T21 | N/A | N/A | ✅ (partial) |
| T22 | ✅ | ✅ | ✅ |
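Rather than counting rows, one way to read the table is to compare setups by *which* threats apply to each. The Python snippet below is a minimal sketch of that idea. The encoding is my own assumption: ✅ and ✅ (partial) both count as "applies", every ❌ counts as "does not apply" with its caveats dropped, and N/A is kept as a separate value. The names `SUMMARY`, `applicable` and `compare` are purely illustrative and don't belong to any existing tool.

```python
# Minimal sketch (hypothetical encoding, not an existing tool): the summary
# table as data, so setups can be compared by WHICH threats apply.
#   "yes" = ✅, "partial" = ✅ (partial), "no" = ❌ (caveats dropped), "na" = N/A
SETUPS = ("basic", "transparent", "custom")

SUMMARY: dict[str, tuple[str, str, str]] = {
    "T01": ("no", "no", "no"),
    "T02": ("yes", "no", "no"),
    "T03": ("yes", "no", "no"),
    "T04": ("yes", "no", "no"),
    "T05": ("yes", "no", "no"),
    "T06": ("no", "no", "no"),
    "T07": ("yes", "no", "no"),
    "T08": ("yes", "yes", "yes"),
    "T09": ("yes", "yes", "yes"),
    "T10": ("yes", "yes", "yes"),
    "T11": ("na", "partial", "no"),
    "T12": ("na", "yes", "no"),
    "T13": ("na", "yes", "yes"),
    "T14": ("na", "partial", "na"),
    "T15": ("yes", "partial", "na"),
    "T16": ("yes", "yes", "no"),
    "T17": ("no", "no", "yes"),
    "T18": ("no", "no", "yes"),
    "T19": ("no", "no", "yes"),
    "T20": ("na", "no", "partial"),
    "T21": ("na", "na", "partial"),
    "T22": ("yes", "yes", "yes"),
}

# Both full and partial applicability are treated as "the threat applies".
APPLIES = {"yes", "partial"}


def applicable(setup: str) -> set[str]:
    """Return the IDs of threats that (fully or partially) apply to a setup."""
    col = SETUPS.index(setup)
    return {tid for tid, row in SUMMARY.items() if row[col] in APPLIES}


def compare(a: str, b: str) -> dict[str, list[str]]:
    """Split threats into those specific to setup a, specific to b, and shared."""
    ta, tb = applicable(a), applicable(b)
    return {
        f"only_{a}": sorted(ta - tb),
        f"only_{b}": sorted(tb - ta),
        "shared": sorted(ta & tb),
    }


if __name__ == "__main__":
    for key, ids in compare("transparent", "custom").items():
        print(f"{key}: {', '.join(ids)}")
```

For the transparent vs custom comparison, the sketch reports T11, T12, T14, T15 and T16 as specific to transparent encryption, T17 to T21 as specific to custom encryption, and T08, T09, T10, T13 and T22 as shared. Framed this way, the useful question is whether the threats specific to each setup are relevant to you. How many there are matters much less.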

The above table obviously doesn't tell the full story. We have seen that certain threats can be mitigated by corresponding controls, and that certain threats are very specific to what a particular setup entails, so they do not make sense for other setups (read: counting threats is a bad metric). It's also worth noting that not all threats are equally complex to carry out.

Having said that, it's still possible to draw some conclusions for each setup:

- If you care about the content of your emails (even from a purely privacy standpoint), the basic setup using Gmail, Outlook & co. simply doesn't deliver what you want. While it's still relatively unlikely someone compromises Google/Microsoft's infrastructure to access your emails, the content of your emails is 100% accessed by someone else. I wish I could say "even if not for malicious purposes", but I really can't say in good faith that the advertising industry is not malicious.

- Using secure email providers means that a lot of trust is placed in the provider itself. A failure or a breach of the provider can result in the content of your emails being disclosed, which means you should choose a provider you trust, ideally with a good track record and some formal certifications that attest to at least basic security. However, the attacks that are specific to this setup are complex and expensive. Unless you are a high-profile target, it's very unlikely they will ever be relevant to you.

- Using custom tooling/encryption spreads the risk over multiple entities without fundamentally changing the supply-chain risk. However, deliberate updates and the obscurity of the tools in use can make supply-chain attacks harder to execute. On the other hand, software needs to be chosen very carefully, because it's reasonable to expect large, established companies to have more robust security practices than the average maintainer. If you have the highest security requirements, this setup, paired with additional security measures such as security keys for crypto storage/operations, clean devices and a robust upgrade procedure, is probably the most secure.

- Some threats related to email are fundamentally impossible to mitigate. Every email we send is received by someone, and every email we receive is sent by someone. For example, it's impossible to know for sure what security practices the sender or recipient follows.

- If law enforcement (or a sophisticated attacker) is after you, email is just not a suitable communication medium. Companies like Proton have in the past been forced by law enforcement to disclose some data. Even in the cases that made the news, the data disclosed was minimal, and these were cases where anti-terrorism laws were used. However, it's not possible to know whether additional, more secret requests are honored, nor the extent to which providers are forced to modify their services. The theoretical possibility exists, and in my opinion it is more likely that law enforcement will engage with an email provider than with a software vendor (to produce a backdoored update).

- It's also incredibly hard to prevent people with physical access to our machines from accessing email content. The controls that help with this threat are simply good hygiene: lock your device, log out of your account, and delete sensitive emails once they are sent or received. Unfortunately, this is true for most communication tools, including those based on more secure protocols (like Matrix or Signal).

Conclusion

Having looked at email setups in quite some detail, I want to conclude this post with my personal opinion on the trade-offs of the different setups.

Different people use email for different tasks and types of communication. Despite not leaking state secrets, I find the content of my email is generally private. "Private" in this case means that it's not a catastrophe if the content should be revealed, but I also don't want to reveal it by default.

Because of this, I simply cannot recommend mainstream email providers like Gmail or Outlook. The fact that each email is analyzed and used to profile me (or for purposes I am not even aware of) is simply unacceptable.

On the other hand, I use email daily, for many purposes and from many devices, and I want a setup that is fundamentally simple and involves as little friction as possible. As I said, I am not leaking state secrets, so I am an unlikely target for law enforcement or sophisticated attackers. The cost of stealthily compromising a secure email company is simply disproportionate to the gain from accessing my emails. Likewise, it's unrealistic to think that a sophisticated attacker would target me specifically, to the point of discovering and then compromising the specific tooling I use to access, encrypt and decrypt emails. Also, a $5 wrench could probably achieve the same goal more quickly and cheaply.

What I mean by this is that while some threats do exist, they are not something I personally concern myself with. Each of us can make the best decision based on our needs and situation; however, I want to reiterate something I mentioned earlier: I, and I think this applies to most people, mostly concern myself with a few threat actors. In my case, I believe I mostly need to protect myself from the average cybercriminal looking for some money. From this perspective, all sophisticated threats that involve supply-chain compromise are out of the picture, which makes the choice between transparent encryption and custom encryption essentially irrelevant. If there are no substantial differences, then the best compromise between security and usability is using a secure email provider, i.e., the transparent encryption setup. While this setup carries more risks than a hardened custom encryption setup (i.e., one using security keys to store keys and perform crypto operations), those threats are simply not relevant enough for me to justify the loss in user experience.

This is why I am using Proton, which, like most other secure email providers, offers me an experience that is basically the same as the one I used to get with Gmail, but with privacy guarantees and a dead-simple PGP integration (including with people not using the same provider). I am personally happy with the service, but you can also evaluate similar providers and decide based on jurisdiction, pricing, trust in the company, product offering and more. You may even be able to use some of the information in this post to adapt a threat model to the implementations of the providers you are evaluating (which would make me very happy, and I would love to hear about it!).

In any case, I hope this post provides enough context and detail to clearly convey the process I personally used to choose my own email setup. Anybody can follow a similar process and ground decisions that are often based on gut feelings in data and risk estimations, which, I remind you, are always subjective. I also hope this post makes it obvious that arguments like "[PROVIDER] is a honeypot because it stores the private key" are generally delusional. Any program you use requires trusting the developer(s) who wrote it, those who distribute it and a wider set of entities (e.g., the CAs that make TLS work, the OS developers, etc.). There is no setup that doesn't require placing trust in someone, but you can choose whom you trust more. For some people, the bare fact that an entity is a company is a reason for distrust; for others, the business incentive is instead a reason to trust that entity.

As long as your choice is based on an evaluation of the risks that it entails, and is acceptable to you, it is the right choice.

If you find an error, want to propose a correction, or you simply have any kind of comment and observation, feel free to reach out via email or via Mastodon.